Build reliable and trustworthy models

GuardAI is a platform for testing machine learning models against the failure modes they encounter in production, such as edge cases, unexpected input data, poisoned input data, and adversarial attacks. GuardAI integrates with your existing MLOps workflows and enables effortless and reproducible quality testing of ML models, improving their trustworthiness and reliability.

GuardAI version 1.0 was designed to verify the safety and security of AI models in ADAS, Autopilot, and autonomous driving systems, but it can also be used for computer vision tasks in the IoT, banking, cybersecurity, and manufacturing industries. We believe that GuardAI can help make AI safer and more secure for developers, engineers, product managers, and end users.

KEY FEATURES

A focus on safety-critical system testing

- Natively supports automotive AI models

- Test automotive model reliability with camera-specific distortions and challenging weather conditions

- Test attack robustness with adversarial machine learning

- Verify training data with data poisoning tests

State-of-the-art attacks, continuously updated

- Rapid development of new noises, corruption tests, and adversarial attacks, based on state-of-the-art and internal research

- Hyperparameter optimization

- Automatic scaling in cloud and enterprise infrastructure

Easy to use, practical, and insightful

- Easy-to-use GUI-based platform for tests, evaluations, and results overview

- APIs for simple integration into existing MLOps pipelines

- Jupyter notebooks, an SDK, and documentation to support integration

WHY GUARDAI?

SUPPORTED NOISES

Over 40 camera-specific noises, challenging weather conditions, and various corruption noises:

- Camera-specific noises
  - Camera distortions
  - Sensor noise
  - Perspective
- Challenging weather conditions
  - Snow, rain, blur, fog, sun flares, and more
- Corruption noises
  - Classic corruptions, including Gaussian and Poisson noise
  - Pixelation, compression, and more

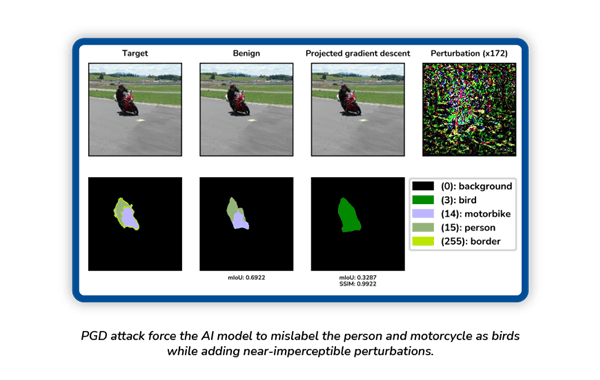
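Three of the corruption noises named above (Gaussian noise, Poisson noise, and pixelation) are easy to state precisely. The sketch below is a minimal, stdlib-only illustration applied to a grayscale image stored as nested lists with values in [0, 1]. It is not GuardAI's implementation: the function names, the `sigma`, `peak`, and `block` parameters, and the image representation are hypothetical choices made for this example.

```python
import math
import random

# Hypothetical representation: a grayscale image as rows of floats in [0.0, 1.0].
Image = list[list[float]]


def clip(v: float) -> float:
    """Keep a pixel value inside the valid [0.0, 1.0] range."""
    return min(1.0, max(0.0, v))


def gaussian_noise(img: Image, sigma: float, rng: random.Random) -> Image:
    """Add zero-mean Gaussian noise with standard deviation `sigma` to each pixel."""
    return [[clip(p + rng.gauss(0.0, sigma)) for p in row] for row in img]


def _poisson_sample(lam: float, rng: random.Random) -> int:
    """Draw one Poisson(lam) sample with Knuth's method (adequate for small lam)."""
    threshold = math.exp(-lam)
    k, prod = 0, 1.0
    while True:
        prod *= rng.random()
        if prod <= threshold:
            return k
        k += 1


def poisson_noise(img: Image, peak: float, rng: random.Random) -> Image:
    """Shot noise: each pixel becomes a photon count with mean p * peak, rescaled."""
    return [[clip(_poisson_sample(p * peak, rng) / peak) for p in row] for row in img]


def pixelate(img: Image, block: int) -> Image:
    """Replace every block x block tile with the mean value of that tile."""
    h, w = len(img), len(img[0])
    out = [row[:] for row in img]
    for by in range(0, h, block):
        for bx in range(0, w, block):
            ys = range(by, min(by + block, h))
            xs = range(bx, min(bx + block, w))
            mean = sum(img[y][x] for y in ys for x in xs) / (len(ys) * len(xs))
            for y in ys:
                for x in xs:
                    out[y][x] = mean
    return out


if __name__ == "__main__":
    rng = random.Random(0)  # fixed seed so the corruptions are reproducible
    img = [[(x + y) / 14 for x in range(8)] for y in range(8)]  # 8x8 gradient
    for name, out in [
        ("gaussian", gaussian_noise(img, sigma=0.1, rng=rng)),
        ("poisson", poisson_noise(img, peak=30.0, rng=rng)),
        ("pixelate", pixelate(img, block=4)),
    ]:
        print(name, [round(p, 2) for p in out[3]])
```

Raising `sigma` or lowering `peak` makes the corruption stronger. With Poisson noise, the variance of each output pixel is `p / peak`, so brighter pixels get noisier, which is why it is a common model for sensor shot noise.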

ADVERSARIAL ATTACKS

We support a wide range of adversarial attacks, including:

- Auto Projected Gradient Descent (AutoPGD)

- Basic Iterative Method (BIM)

- Dense Adversarial Generation (DAG)

- DeepFool

- Shadow Attack (ShA)

- Boundary Attack (BA)

- Geometric Decision-Based Attack (GeoDA)

- Square Attack (SA)

- CopyCat

- Configure and add custom adversarial attacks

Find the full list of attacks on our platform.
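To illustrate what one of the listed attacks does, the sketch below implements the Basic Iterative Method (BIM) against a toy logistic-regression classifier, chosen only because its input gradient has the closed form `(p - y) * w`. BIM repeatedly takes small signed-gradient steps of size `alpha` that increase the loss, and after each step projects the input back into an L-infinity ball of radius `eps` around the original and into the valid range [0, 1]. The model, weights, and parameter values are hypothetical; a real test would differentiate through the network under test, and GuardAI's own implementation may differ.

```python
import math


def sigmoid(z: float) -> float:
    """Numerically stable logistic function."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def predict(w: list[float], b: float, x: list[float]) -> float:
    """Probability of class 1 under a toy logistic-regression model."""
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


def sign(v: float) -> int:
    return (v > 0) - (v < 0)


def bim_attack(w: list[float], b: float, x: list[float], y: int,
               eps: float, alpha: float, steps: int) -> list[float]:
    """Untargeted L-infinity Basic Iterative Method against the toy model.

    Each step moves every feature by `alpha` in the sign of the loss gradient,
    then clips the result to the eps-ball around `x` and to the range [0, 1].
    """
    x_adv = list(x)
    for _ in range(steps):
        p = predict(w, b, x_adv)
        # For binary cross-entropy, d(loss)/d(x_i) = (p - y) * w_i
        grad = [(p - y) * wi for wi in w]
        x_adv = [xa + alpha * sign(g) for xa, g in zip(x_adv, grad)]
        # Projection: stay within eps of the original input ...
        x_adv = [min(max(xa, xo - eps), xo + eps) for xa, xo in zip(x_adv, x)]
        # ... and inside the valid feature range
        x_adv = [min(max(xa, 0.0), 1.0) for xa in x_adv]
    return x_adv


if __name__ == "__main__":
    w, b = [2.0, -3.0, 1.5, 0.5], -0.2  # hypothetical model weights
    x, y = [0.6, 0.2, 0.5, 0.4], 1      # clean input, classified as class 1
    x_adv = bim_attack(w, b, x, y, eps=0.2, alpha=0.05, steps=10)
    print(f"clean p(class 1) = {predict(w, b, x):.3f}")
    print(f"adversarial p(class 1) = {predict(w, b, x_adv):.3f}")
```

With these toy weights, the attack flips the prediction from class 1 to class 0 while changing no feature by more than `eps`. Because the step direction depends only on the sign of the gradient, the same loop works unchanged for any differentiable model once `grad` is computed by that model's framework.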

Test with your preferred framework

GuardAI supports popular machine learning frameworks, including PyTorch, Keras, ONNX, and TensorFlow, as well as the main computer vision tasks: detection, segmentation, and classification.

GuardAI lets you add and configure custom models in any of the listed frameworks.

MULTIPLE DATASET FORMATS

For Classification

- MNIST

- CIFAR-10

- ImageNet

For Segmentation

- Pascal VOC Segmentation

- Mapillary

- COCO Segmentation

For Detection

- Pascal VOC Detection

- COCO Detection

- YOLO

GuardAI allows you to add and configure custom datasets.
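The detection formats above differ mainly in how they encode bounding boxes. A YOLO label file stores one object per line as `class x_center y_center width height`, normalized by the image width and height; COCO stores `[x_min, y_min, width, height]` in pixels; Pascal VOC stores corner coordinates (`xmin`, `ymin`, `xmax`, `ymax`) directly. The minimal sketch below converts the first two to pixel corner boxes, a step typically needed before comparing boxes from different formats. The helper names are hypothetical, and this is not GuardAI's dataset loader.

```python
def yolo_to_xyxy(line: str, img_w: int, img_h: int) -> tuple[int, float, float, float, float]:
    """Convert one YOLO label line to (class_id, x_min, y_min, x_max, y_max) in pixels."""
    cls, xc, yc, bw, bh = line.split()
    # YOLO coordinates are fractions of the image size; scale them to pixels
    cx, w = float(xc) * img_w, float(bw) * img_w
    cy, h = float(yc) * img_h, float(bh) * img_h
    return int(cls), cx - w / 2, cy - h / 2, cx + w / 2, cy + h / 2


def coco_to_xyxy(bbox: list[float]) -> tuple[float, float, float, float]:
    """Convert a COCO bbox [x_min, y_min, width, height] in pixels to corner form."""
    x, y, w, h = bbox
    return x, y, x + w, y + h


def parse_yolo_file(text: str, img_w: int, img_h: int) -> list[tuple[int, float, float, float, float]]:
    """Parse every non-empty line of a YOLO .txt label file."""
    return [yolo_to_xyxy(ln, img_w, img_h) for ln in text.splitlines() if ln.strip()]


if __name__ == "__main__":
    labels = "0 0.5 0.5 0.25 0.5\n2 0.1 0.2 0.1 0.1\n"
    print(parse_yolo_file(labels, img_w=640, img_h=480))
    print(coco_to_xyxy([10.0, 20.0, 30.0, 40.0]))  # (10.0, 20.0, 40.0, 60.0)
```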

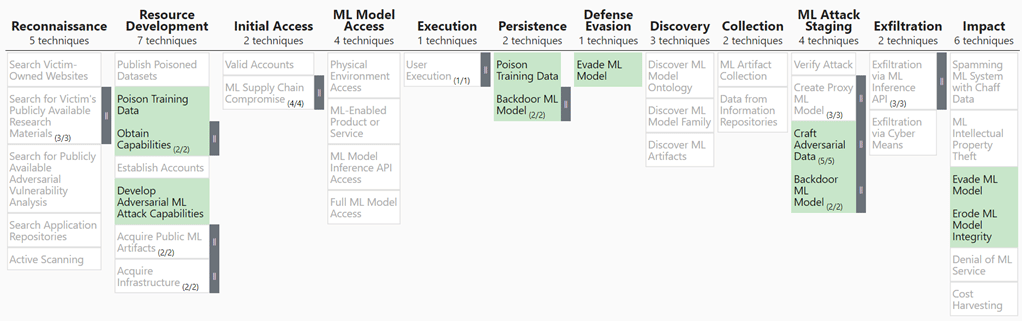

MITRE ATLAS™ mapping

The GuardAI platform can be used to test your AI system’s robustness against many adversary tactics and techniques, as mapped by the MITRE ATLAS framework.

ATLAS is a knowledge base of known adversary tactics and techniques for ML systems, organized in columns progressing from left to right. The columns signify the timeline of an attack, i.e., the steps adversaries must complete to achieve their objective.

The threat landscape covered by GuardAI is shown below, highlighted in green.

More from our Cybersecurity Team

In addition to the GuardAI platform, our Cybersecurity team offers more services that help safeguard connected technologies:

- Simulation of real-life adversarial attacks against ADAS and Autopilot systems

- Testing of defenses against adversarial attacks

- Penetration testing for AI platforms

- Intellectual property protection of AI platforms, models, and datasets

Let's empower secure AI

Register with us to get your exclusive invitation to test AI models using GuardAI.